Why Enterprise Agentic AI Autonomy Fails in Production

Agentic AI rarely breaks in the demo. It starts to strain when it enters the real operating workflow under business pressure.

That is where many enterprise teams are getting stuck now. Early pilots look promising. The agent completes tasks, responds well, and seems capable enough to move ahead. Then production reality takes over.

Work has to move across systems, approvals, policy checks, exceptions, and handoffs that were never built for autonomous execution. What looked efficient in a controlled setting starts creating rework, extra supervision, and uncertainty once the business depends on it daily.

That is why so many enterprise initiatives stall at the edge of scale. The issue is often described as an AI problem, but in practice the larger gap is operational. Many organizations are trying to expand agent capability before they have the workflow design, orchestration, governance, and observability needed to support it safely.

What matters at this stage is not more autonomy for its own sake. You need execution that holds up in production, stays inside control boundaries, and keeps work moving without repeated manual intervention. That only happens when the operating foundation is in place before autonomy is expanded.

This article explains why enterprise agentic AI breaks down operationally and what must be built first to make it scalable, governed, observable, and dependable in real enterprise environments.

Agentic AI Workflow Failures: Lack of Workflow Redesign in Enterprise Operations

This is one of the most common patterns in enterprise rollouts. An agent gets introduced into an existing process with the expectation that execution will become smoother.

The task gets completed, but the process around it does not improve. Handoffs remain unclear. Approvals still slow progress. Exceptions continue to move between systems and people.

What happens next under production pressure is revealing.

The agent starts operating inside a workflow that was never designed for autonomous execution. The original process still depends on manual checkpoints, fragmented context, and loosely defined ownership. Instead of removing friction, the agent makes that friction more visible. Work gets done, but it does not move in a dependable way.

This creates operational strain. Teams start rechecking outputs. Extra validation steps get added. Some parts of the workflow move faster, while others turn into bottlenecks. The overall system feels uneven, even though the underlying capability has improved.

At that point, it becomes clear that the issue is not how well the agent performs on its own. The issue is how the workflow is structured around that performance. Without redesigning the process to support coordinated, controlled execution, adding more agents only increases complexity.

For agentic AI to scale in a meaningful way, the starting point is not the agent itself. It is the workflow. Until that is redesigned for clarity, ownership, and clean execution paths, the system will continue to behave unpredictably, no matter how capable the technology becomes.

Agentic AI Governance Failures: Missing Policy Controls and Human Oversight in Production

This is where enterprise confidence starts to weaken. Actions are executed and decisions are made, yet there is no clear boundary around what the system should do, what it must not do, and when a human needs to step in. What looked efficient starts to feel exposed once those actions carry business consequences.

In many deployments, governance is treated like a later-phase fix. The attention stays on capability and speed, while control logic remains incomplete. As the agent begins working across workflows, the cracks become easier to see. Approval paths are loosely enforced. Escalation rules vary by case. Ownership becomes harder to trace when an outcome falls outside expectations.

That creates a slower, defensive operating pattern. Teams insert manual checkpoints to reduce risk. Decisions are reviewed after execution. In some cases, automation gets rolled back because the system cannot be trusted to stay within acceptable limits. What was supposed to accelerate work ends up slowed by layers of review.

The real issue is not random behavior. It is that the surrounding model does not clearly define how decisions should be governed, when exceptions should be escalated, or who remains accountable. Without those boundaries, every action introduces uncertainty and every exception pulls people back into recovery.

For agentic AI to scale in operations, governance has to be designed into the system from the beginning. Clear policy controls, defined approval logic, and human oversight are what make autonomous execution safe, predictable, and usable in real workflows.
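As a rough illustration of what "designed in from the beginning" can mean, the sketch below checks every proposed action against an explicit policy before anything executes. All names here (`Policy`, `ProposedAction`, `evaluate`, the refund threshold, the owner label) are hypothetical and chosen only for the example; a real policy model would be shaped by your own approval and escalation rules.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class Decision(Enum):
    ALLOW = "allow"                      # agent may act on its own
    NEEDS_APPROVAL = "needs_approval"    # a human must approve first
    DENY = "deny"                        # outside the agent's boundary


@dataclass(frozen=True)
class Policy:
    allowed_actions: FrozenSet[str]  # what the agent may do at all
    approval_threshold: float        # amounts above this need a human
    accountable_owner: str           # who answers for the outcome


@dataclass(frozen=True)
class ProposedAction:
    name: str
    amount: float


def evaluate(action: ProposedAction, policy: Policy) -> Decision:
    """Check a proposed action against the policy before it executes."""
    if action.name not in policy.allowed_actions:
        return Decision.DENY
    if action.amount > policy.approval_threshold:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW


# Hypothetical policy for a support workflow.
policy = Policy(
    allowed_actions=frozenset({"issue_refund", "update_ticket"}),
    approval_threshold=500.0,
    accountable_owner="support-ops-lead",
)

small = evaluate(ProposedAction("issue_refund", 40.0), policy)
large = evaluate(ProposedAction("issue_refund", 2500.0), policy)
blocked = evaluate(ProposedAction("delete_account", 0.0), policy)
print(small.value, large.value, blocked.value)
```

The point of the design is that the boundary is data, not convention: what is allowed, when a human steps in, and who is accountable are all stated before the agent acts.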

Agentic AI Observability and Evaluation Gaps: Lack of Traceability in AI Decision Flows

The system produces an outcome, but you cannot clearly see how it got there.

That is where trust starts to erode in enterprise environments. A result may look correct on the surface, but the path behind it remains difficult to inspect. Teams cannot easily see which inputs were used, which tool calls were made, how the workflow progressed, or where the decision started to drift. What appears capable in a demo becomes harder to trust when the business has to verify it under real operating conditions.

As agentic AI takes on more responsibility, that lack of visibility creates operational risk. When a result needs to be checked, teams spend time reconstructing steps that were never captured in a usable way. When something breaks, it becomes difficult to tell whether the issue came from the model, the prompt, the data, the tool interaction, or the orchestration logic. Debugging slows down, reviews get heavier, and confidence drops with each unexplained result.

The response is usually predictable. Extra approvals get added. Teams limit usage to lower-risk workflows. Scale slows because reliability cannot be measured clearly over time. The challenge is not only getting the right answer. It is being able to trace, inspect, evaluate, and improve how that answer was produced. Without observability built into the system, agentic AI stays difficult to control and even harder to scale responsibly.
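One way to picture the traceability described above is a small run trace that captures each step's inputs, output, and error as it happens, so nobody has to reconstruct them later. This is a minimal sketch under assumed names (`Trace`, `Step`, `lookup_order`, `issue_refund` are all hypothetical), not a substitute for a proper tracing or evaluation platform.

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Step:
    name: str                      # hypothetical step name
    kind: str                      # "model", "tool", or "orchestration"
    inputs: Dict[str, Any]
    output: Any = None
    error: Optional[str] = None
    started_at: float = field(default_factory=time.time)


@dataclass
class Trace:
    run_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    steps: List[Step] = field(default_factory=list)

    def record(self, name: str, kind: str, inputs: Dict[str, Any],
               fn: Callable[..., Any]) -> Any:
        """Run fn(**inputs) and capture inputs, output, and any error."""
        step = Step(name=name, kind=kind, inputs=dict(inputs))
        self.steps.append(step)
        try:
            step.output = fn(**inputs)
        except Exception as exc:
            step.error = f"{type(exc).__name__}: {exc}"
        return step.output

    def first_failure(self) -> Optional[Step]:
        """Return the earliest step that raised, or None if all succeeded."""
        return next((s for s in self.steps if s.error is not None), None)


# Hypothetical tools standing in for real integrations.
def lookup_order(order_id: str) -> Dict[str, Any]:
    return {"order_id": order_id, "total": 120.0}


def issue_refund(order_id: str, amount: float) -> None:
    raise TimeoutError("payment API did not respond")


trace = Trace()
order = trace.record("lookup_order", "tool", {"order_id": "A-17"}, lookup_order)
trace.record("issue_refund", "tool",
             {"order_id": "A-17", "amount": order["total"]}, issue_refund)

failed = trace.first_failure()
print(failed.name, failed.kind, failed.error)
```

Because every step carries its `kind`, a failure can be attributed to the model, a tool interaction, or the orchestration logic without guessing.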

Operational Foundations for Agentic AI: What to Build First for Scalable and Responsible Enterprise AI

To make agentic AI reliable in production, your system needs to move from isolated execution to controlled, connected, and observable workflows.

What scales in enterprise environments is not the agent by itself. It is the operating foundation around it. When that foundation is in place, execution becomes more predictable, coordination improves, and confidence builds across the workflow.

There are five core capabilities that need to be in place before agentic AI can scale effectively.

- Workflow-first structure for execution clarity

Your workflows need to be defined before agents are introduced. That includes decision points, approvals, inputs, outputs, and exception paths. When the structure is clear, the agent works inside a predictable flow instead of trying to compensate for an undefined process.

- Orchestration layer for cross-system coordination

Work needs to move reliably across systems, tools, and teams. An orchestration layer helps each step connect to the next, manages dependencies, and handles transitions without breaking the workflow.

- Governance framework for controlled action

Every action should operate within defined boundaries. Approval rules, escalation paths, and control mechanisms help keep execution safe, reviewable, and aligned with business requirements.

- Observability and evaluation for system visibility

You need visibility into how decisions are made and how workflows perform over time. Tracking execution paths, evaluating outcomes, and identifying failure points helps maintain reliability and improve performance.

- Operating model for ownership and accountability

Clear ownership is required for monitoring, intervention, and continuous improvement. Without defined responsibility, even a well-designed system becomes harder to manage.
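The first two capabilities and the last one can be sketched together: a workflow declared up front, with an owner per step and a named exception path, run by a very small orchestrator. Everything here (`StepDef`, `Workflow`, `run_workflow`, the invoice steps and team names) is a hypothetical illustration rather than a reference design, and a production orchestrator would also need retries, persistence, and timeouts.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class StepDef:
    name: str
    run: Callable[[Dict], Dict]      # takes the context, returns updates
    owner: str                       # who is accountable for this step
    on_error: Optional[str] = None   # name of the exception-path step


@dataclass
class Workflow:
    steps: List[StepDef]                  # main path, in order
    exception_steps: Dict[str, StepDef]   # exception paths, by name


def run_workflow(wf: Workflow, context: Dict) -> List[str]:
    """Run steps in order; on failure, hand off to the declared exception path."""
    path: List[str] = []
    for step in wf.steps:
        path.append(step.name)
        try:
            context.update(step.run(context))
        except Exception as exc:
            if step.on_error is None:
                raise  # no exception path defined: fail loudly
            context["error"] = str(exc)
            handler = wf.exception_steps[step.on_error]
            path.append(handler.name)
            context.update(handler.run(context))
            context["escalated_to"] = handler.owner
            break  # stop the main path once work is handed off
    return path


# Hypothetical invoice workflow.
def validate(ctx: Dict) -> Dict:
    if ctx["amount"] <= 0:
        raise ValueError("amount must be positive")
    return {"validated": True}


def post(ctx: Dict) -> Dict:
    return {"posted": True}


def to_human(ctx: Dict) -> Dict:
    return {"status": "queued_for_review"}


wf = Workflow(
    steps=[
        StepDef("validate_invoice", validate, owner="ap-team",
                on_error="manual_review"),
        StepDef("post_to_ledger", post, owner="finance-systems"),
    ],
    exception_steps={
        "manual_review": StepDef("manual_review", to_human,
                                 owner="ap-supervisor"),
    },
)

bad = {"amount": -5}
bad_path = run_workflow(wf, bad)

good = {"amount": 10}
good_path = run_workflow(wf, good)
print(bad_path, good_path)
```

The invalid invoice never reaches the ledger step; it lands in a defined review path with a named owner instead of moving unpredictably between systems and people.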

When these elements are built together, agentic AI moves from experimental capability to operational system. Execution becomes more consistent, risk becomes easier to control, and scale becomes practical instead of uncertain.

The harder part for most enterprises is not understanding these foundations in theory. It is applying them across real workflows, systems, approvals, and governance requirements without adding more friction. That is why many organizations turn to an enterprise AI service provider when they need to move agentic AI from something that works in parts to something the business can rely on end to end.

Enterprise Agentic AI Readiness Framework: Key Questions Before Production Deployment

Before moving agentic AI deeper into production, test whether the operating foundation is actually ready.

Ask five direct questions.

Has the workflow been redesigned for autonomous execution instead of simply placing an agent on top of the existing process?

Can work move across systems without manual reconnects, broken handoffs, or repeated intervention?

Are approval rules, escalation paths, and action boundaries clearly defined before the agent is allowed to act?

Can your team trace decisions, inspect failures, and measure reliability over time?

Is ownership clear for monitoring, intervention, and continuous improvement?
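If it helps to make the check explicit, the five questions can be written down as a simple gate that lists what is still open. The keys and the `readiness_gaps` helper are hypothetical names used only for this sketch; the answers still have to come from an honest review of the workflow.

```python
from typing import Dict, List

# The five readiness questions, keyed by a short hypothetical label.
READINESS_QUESTIONS: Dict[str, str] = {
    "workflow_redesigned": "Has the workflow been redesigned for autonomous execution?",
    "cross_system_flow": "Can work move across systems without manual reconnects?",
    "boundaries_defined": "Are approval rules, escalation paths, and action boundaries defined?",
    "traceable": "Can the team trace decisions, inspect failures, and measure reliability?",
    "ownership_clear": "Is ownership clear for monitoring, intervention, and improvement?",
}


def readiness_gaps(answers: Dict[str, bool]) -> List[str]:
    """Return the questions that are not yet answered with a yes."""
    return [
        question
        for key, question in READINESS_QUESTIONS.items()
        if not answers.get(key, False)  # a missing answer counts as a gap
    ]


# Example answers from a hypothetical review.
answers = {
    "workflow_redesigned": True,
    "cross_system_flow": True,
    "boundaries_defined": False,
    "traceable": True,
}

gaps = readiness_gaps(answers)
for question in gaps:
    print("Open:", question)
```

Treating an unanswered question as a gap keeps the default conservative: the agent does not move deeper into production until every item has an explicit yes.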

When that foundation is built first, agentic AI stops feeling unpredictable and starts becoming something the business can trust in real production conditions.

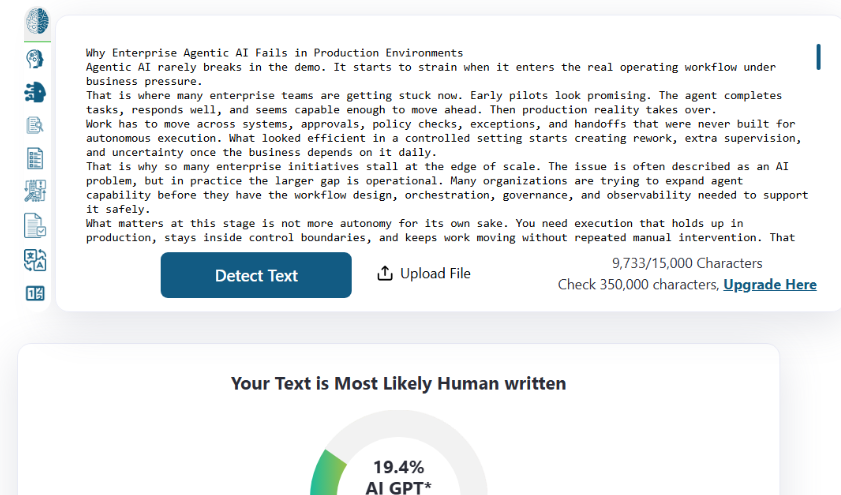

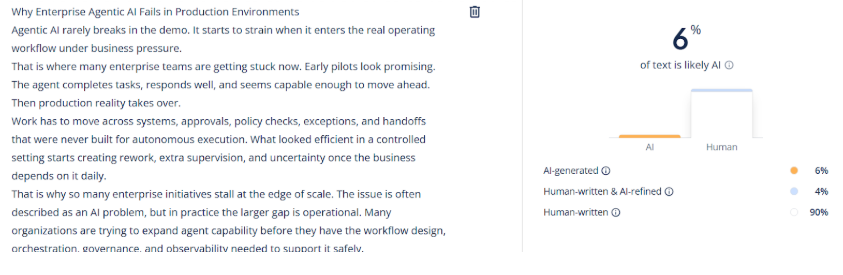
